Singapore Sign Language Detection

Deep learning model for real-time Singapore Sign Language recognition using computer vision and LSTM neural networks

Task

Build a real-time sign language detection system for Singapore Sign Language using deep learning

The Deaf community in Singapore faces significant communication barriers in daily interactions. While there are approximately 5,000 Deaf individuals in Singapore using Singapore Sign Language (SgSL), the general public has limited ability to communicate with them. Existing sign language recognition solutions are often:

- Not optimized for SgSL (Singapore’s native sign language)

- Limited in real-time performance on consumer hardware

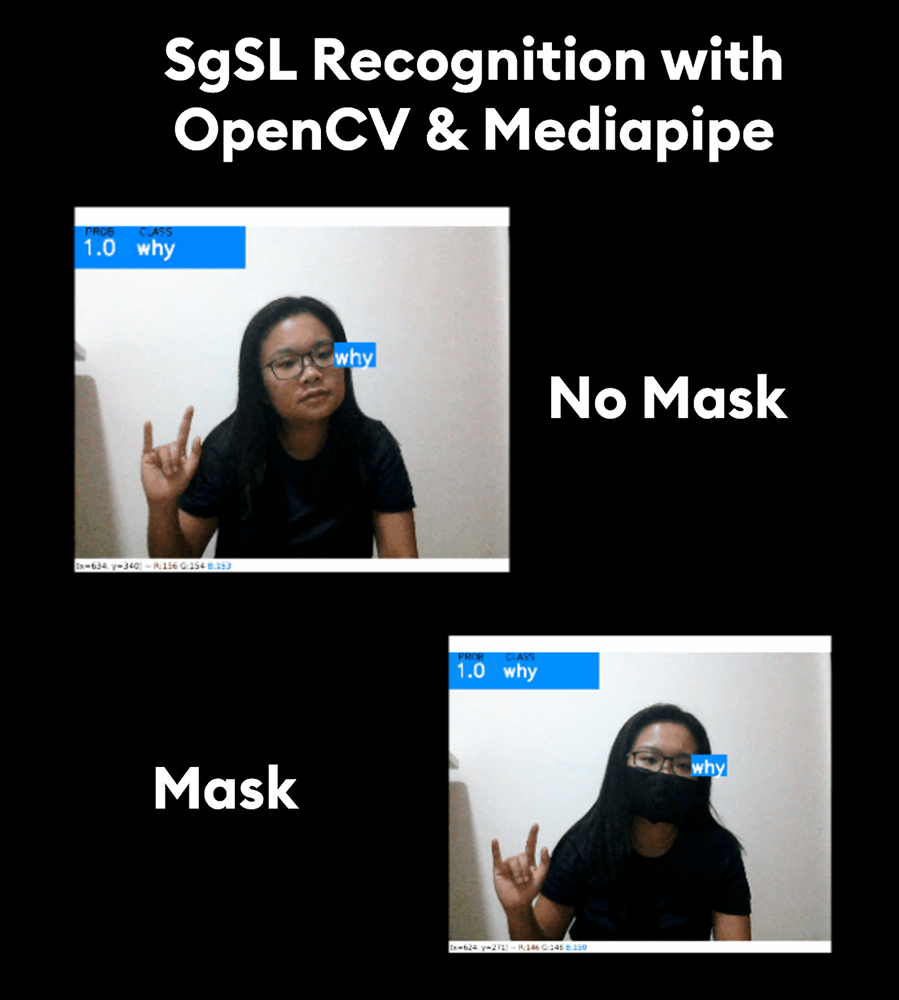

- Unable to handle masked faces (relevant post-COVID)

- Requiring expensive specialized equipment

The goal was to build an accessible, real-time sign language detection system that works on standard webcams and handles both masked and unmasked scenarios.

Computer Vision Pipeline:

- MediaPipe Holistic: Real-time pose detection extracting keypoints from face, hands, and body landmarks

- OpenCV: Video capture and preprocessing for frame extraction

- Feature Engineering: Normalized landmark coordinates for position-invariant recognition

Deep Learning Model:

- Architecture: LSTM (Long Short-Term Memory) neural network for sequential gesture recognition

- Input: 30-frame sequences of landmark keypoints

- Optimization: Keras Tuner for hyperparameter optimization

- Performance: Optimized for both CPU and GPU inference

Data Collection: Captured diverse sign language gestures with both masked and unmasked subjects to ensure model robustness across real-world scenarios.

Feature Engineering: Extracted 468+ keypoints from face, pose, and hand landmarks using MediaPipe. Applied normalization to ensure position and scale invariance.

Model Training:

- Designed LSTM architecture for temporal sequence recognition

- Trained on 30-frame sequences to capture gesture dynamics

- Used Keras Tuner to optimize layer sizes and dropout rates

- Evaluated performance on CPU vs GPU environments

Frame Rate Optimization: Implemented efficient processing pipeline to maintain real-time performance (30 FPS) while running pose estimation and inference simultaneously.

Mask Compatibility: Enhanced model robustness for masked scenarios by focusing on hand and upper body landmarks when facial features are obscured.

Resource Constraints: Optimized model for CPU performance to ensure accessibility on standard laptops without requiring GPU hardware.

Sequence Recognition: Developed custom algorithms for continuous gesture detection, distinguishing between intentional signs and transitional movements.

This project provided valuable insights into real-world applications of computer vision in accessibility. Key learnings include:

- Real-time ML constraints: Balancing model complexity with inference speed for live applications

- Deep learning optimization: Techniques for model compression and hardware-specific tuning

- Inclusive design: Importance of considering diverse user scenarios (masked/unmasked, different lighting)

- Accessibility tech: Understanding how technology can bridge communication gaps for underserved communities

While the project was a personal learning exercise, it highlighted the potential for technology to create more inclusive environments for the Deaf community in Singapore.