I spent years as a consultant telling stories with data...

...now, I engineer the systems that make those stories possible.

How I work

To me, a project isn’t done when the code runs. It’s finished when someone makes a better decision because of it. I love owning the whole journey: from architecting the backend to pitching the final demo in the boardroom, I bridge the gap between the terminal and the stakeholders so the tech doesn’t just run but actually lands with its users. I do this not because I can’t specialize, but because I believe the best systems are built by people who understand every layer.

I build the plumbing

Turning messy data swamps into trusted, single-source-of-truth systems. I’ve stood up AWS environments, automated ETL pipelines that replaced 2 hours of manual Excel pain with a 5-minute script, and maintained the certified data sources behind dashboards serving 1,300+ users.

I make AI earn its keep

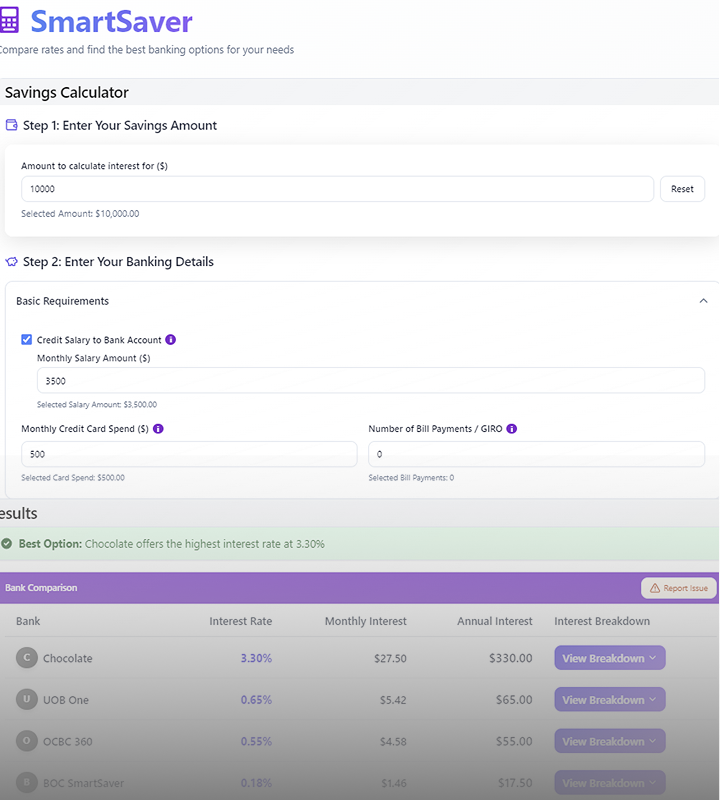

Problem first, technology second. Unlike traditional software, AI doesn’t scale for free. Every API call costs money. Every token adds up. So I think hard about cost structure from day one. I designed a Text2SQL engine that lets non-technical colleagues query databases in plain English. Not because LLMs are cool, but because the alternative was a 3-day analyst turnaround for every question.

I translate for both the boardroom and the terminal

A decade of consulting taught me that the best technical work is useless if nobody understands it. I’ve presented to 1,000 delegates at an international congress, trained teams on AWS and SQL, and built dashboards that drove 138% higher engagement than the platform average. I’m equally comfortable in the terminal and the boardroom, and I know which room needs which language.

Straddling data, tech and consultancy

into strategic business value

Data & Machine Learning

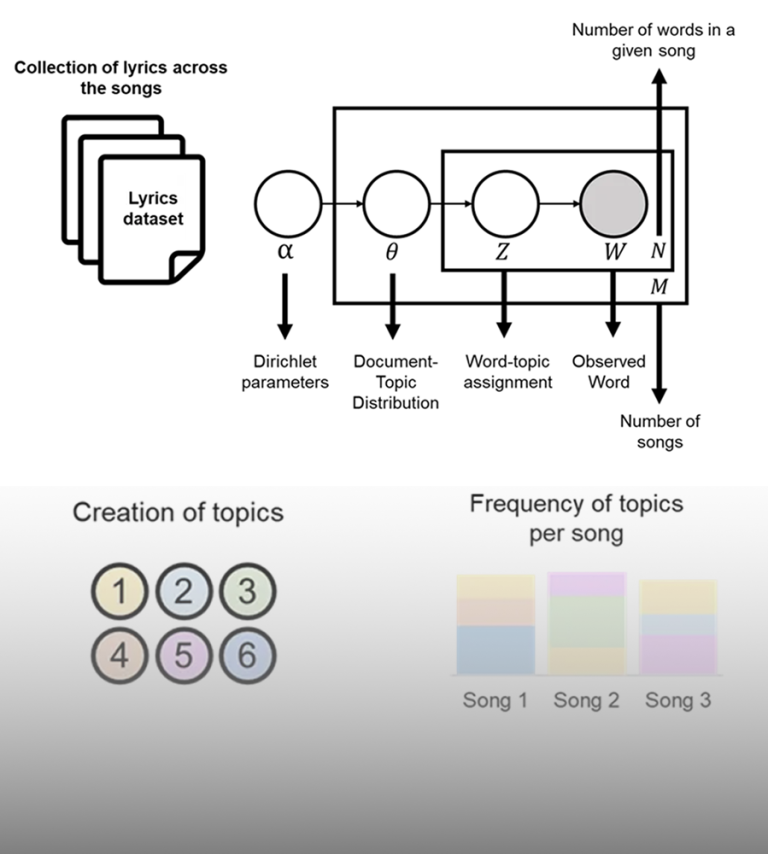

- Built hybrid BERT/GPT-4 deployment architecture achieving GPT-4-level accuracy at 16% lower cost

- Engineered ARIMA forecasting system for housing analysis (1-2% MAPE vs. an industry standard of 5-12%)

- Designed NLP pipeline processing 30+ years of policy documents using Python and OpenAI API

of automated innovation

Tech & Architecture

- Architected a security-first AWS cloud data environment, built to enterprise compliance standards from day one, with automated data quality frameworks

- Engineered ETL/ELT pipelines using PySpark and SQL, reducing processing time from 2 hours to 5 minutes (a 96% reduction)

- Designed a Text2SQL2Chart pipeline that uses an LLM to give users natural-language access to data


Strategic Consultancy

- Led the analytics practice, exceeding revenue targets by 5%

- Managed $2M research projects for global industry clients

- Presented insights through interactive visualizations and client workshops

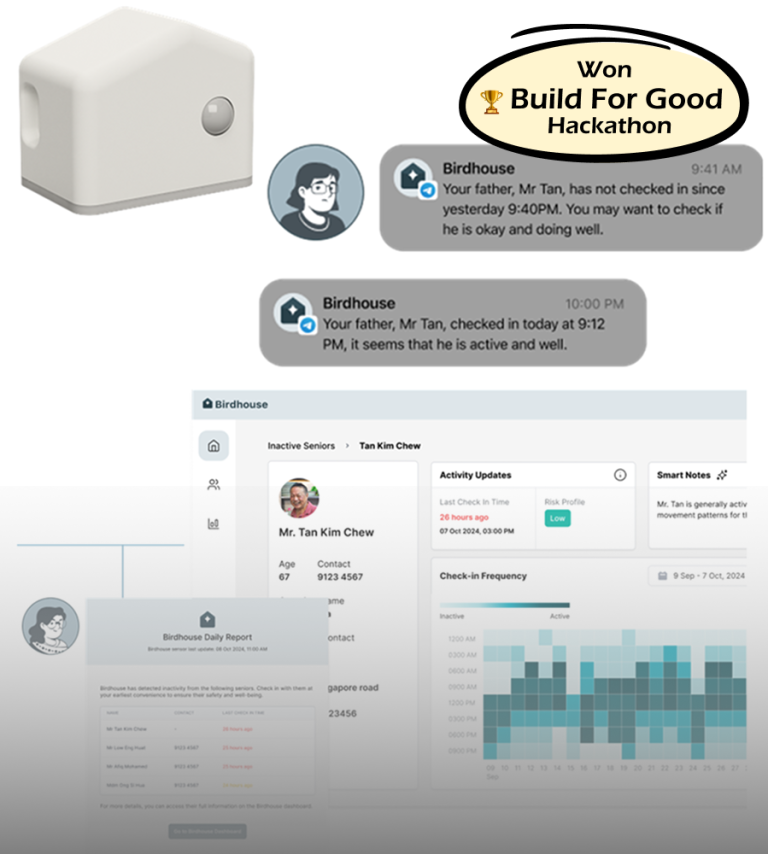

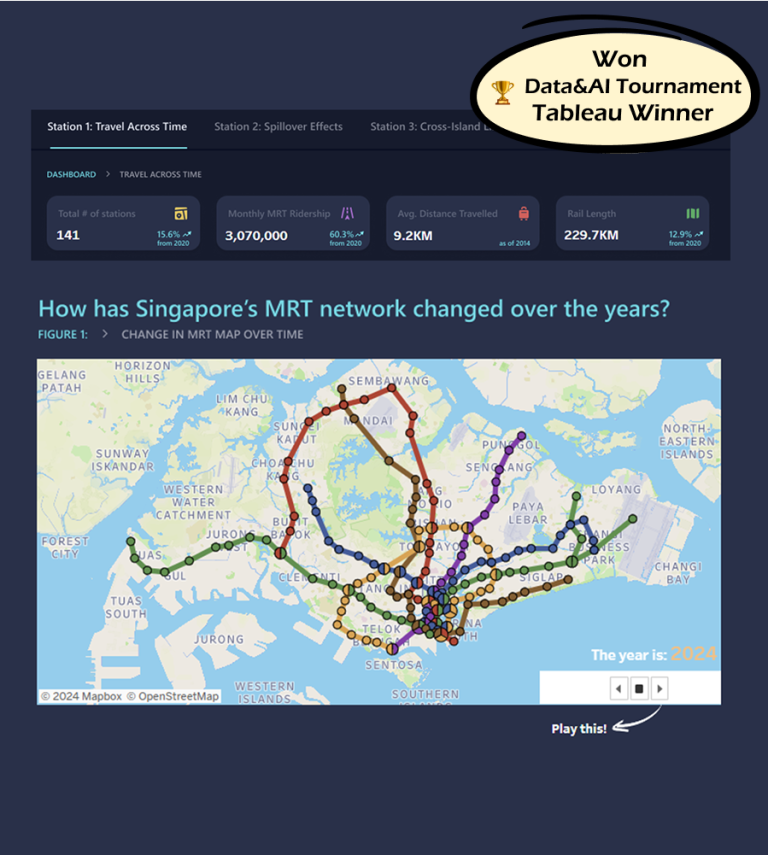

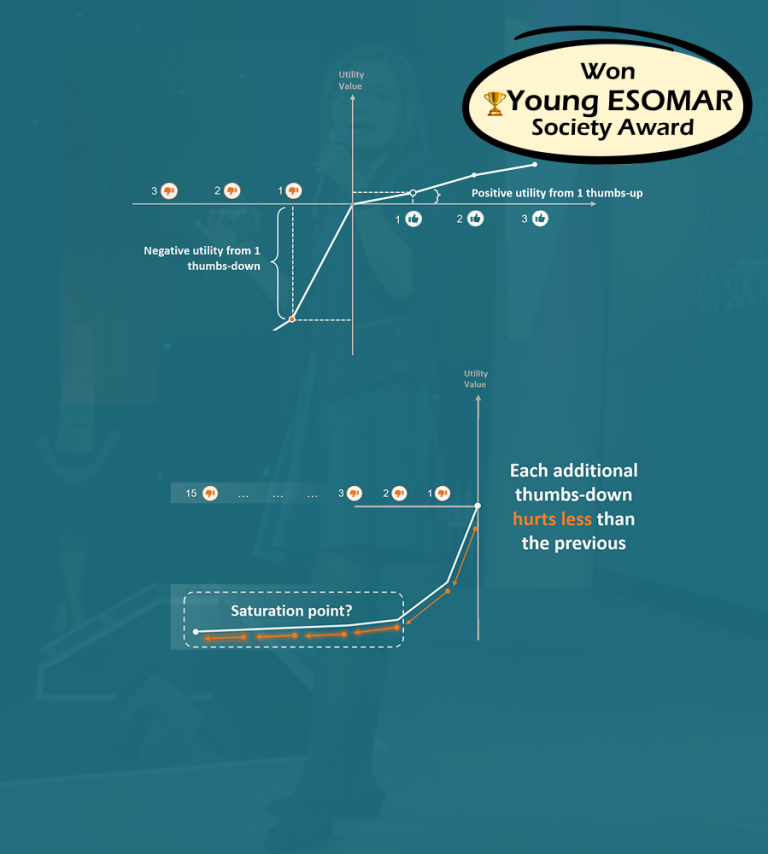

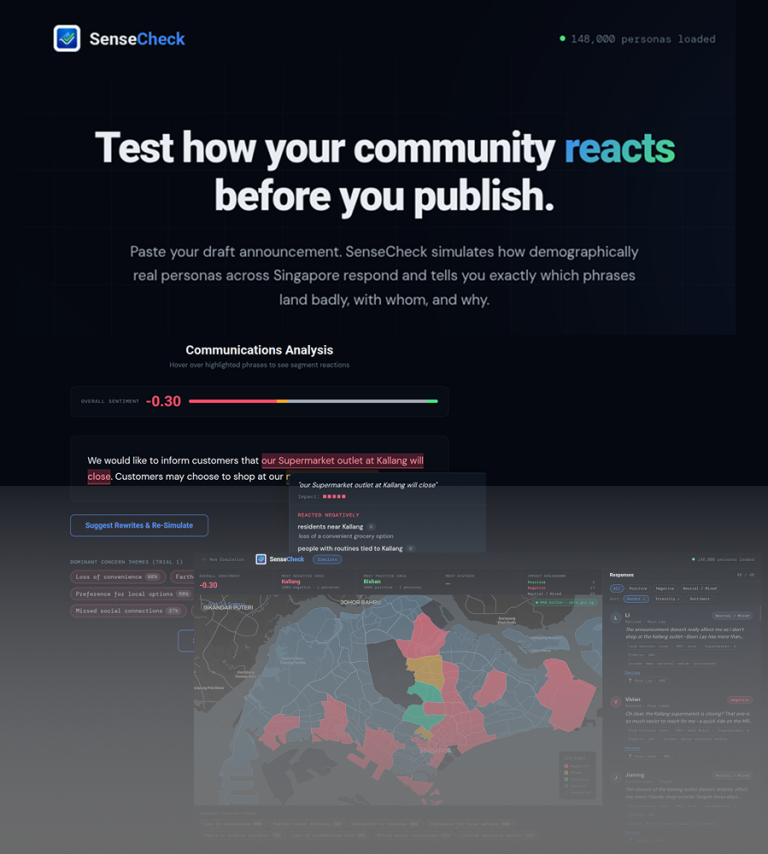

Things I've Built

I believe the best way to learn is to do. What follows is a mix of production systems, competition winners, and weekend experiments. Some projects are anonymized for confidentiality, but the engineering is real.

Debby's

Education

Master of Science in Computational Data Analytics

Georgia Institute of Technology

Technical Certificates

AWS Certified Data Engineer Associate (Certificate)

AWS Certified Solutions Architect (Certificate)

Microsoft Certified Azure Data Engineer (Certificate)

Microsoft Certified Power BI Data Analyst (Certificate)

Product Certificates

Scrum Alliance Certified Scrum Product Owner® (Certificate)